The proliferation of artificial intelligence (AI) in decision-making processes across various sectors—ranging from healthcare and finance to criminal justice and hiring—has raised important questions about the fairness, transparency, and accountability of AI systems. As these systems become increasingly integrated into everyday life, ensuring that AI makes decisions in an ethical, unbiased, and understandable manner has become a primary concern for both companies and governments. This article explores the challenges and strategies for ensuring the fairness and transparency of AI decision-making, the role of regulation and corporate responsibility, and the global efforts being made to address these critical issues.

1. The Importance of Fairness and Transparency in AI Decision-Making

AI decision-making systems are designed to analyze vast amounts of data and make decisions that would be too complex for humans to handle efficiently. While AI systems have proven to be highly effective in fields such as medical diagnosis, fraud detection, and customer service, their reliance on complex algorithms and data models often makes the decision-making process opaque and difficult for users to understand. This opacity, together with the potential for bias in AI models, has raised concerns about the fairness and ethics of these technologies.

1.1 Fairness in AI Decision-Making

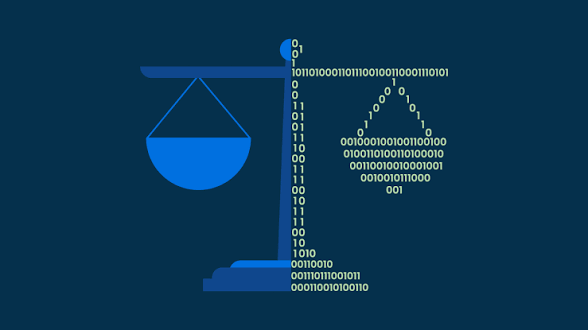

AI fairness refers to the idea that AI systems should make decisions that do not favor any particular group of people over others, especially in sensitive areas like hiring, law enforcement, and lending. Bias in AI is a critical issue because it can perpetuate existing inequalities. For instance, if an AI model is trained on biased historical data, it will likely reproduce these biases in its predictions and decisions. The fairness problem is especially prominent in situations where AI systems make decisions with significant consequences, such as determining a person’s eligibility for a loan, parole, or a job opportunity.

In the criminal justice system, for example, predictive algorithms used to assess the risk of reoffending have been criticized for disproportionately targeting marginalized communities. Similarly, facial recognition technology has been shown to exhibit racial bias, misidentifying people of color at higher rates than white individuals. These instances highlight the need for AI systems to be fair and unbiased to prevent exacerbating societal inequalities.

1.2 Transparency in AI Decision-Making

Transparency in AI decision-making refers to the ability of stakeholders—whether they are end-users, regulators, or the general public—to understand how and why an AI system made a particular decision. The “black-box” nature of many AI models, particularly deep learning systems, makes it difficult to interpret the logic behind decisions. This lack of transparency undermines trust in AI technologies, as users cannot easily verify if the system is acting fairly or making decisions based on discriminatory factors.

For example, in hiring processes, an AI system might be used to screen resumes and select candidates, but without transparency, it is difficult for applicants to understand why they were rejected. Moreover, if the AI system was influenced by biased training data, it could be unknowingly perpetuating discrimination, leading to negative consequences for both individuals and organizations.

To address these concerns, AI transparency is crucial for ensuring accountability, building trust, and enabling stakeholders to challenge unfair decisions. However, achieving full transparency in AI systems is complex, as many models involve intricate layers of computation that are difficult for humans to interpret.

2. Challenges in Ensuring Fairness and Transparency

Ensuring fairness and transparency in AI decision-making is not a simple task, and several challenges must be overcome to achieve these goals.

2.1 Bias in Data and Algorithms

One of the primary challenges in ensuring fairness is the presence of bias in the data used to train AI systems. AI systems learn from historical data, and if this data reflects existing biases—whether they are racial, gender, or socio-economic biases—the AI model is likely to inherit and perpetuate those biases. For example, if a hiring algorithm is trained on historical hiring data that reflects gender or racial disparities, the AI model may unfairly favor male or white candidates over female or minority candidates.

Addressing bias in AI requires careful attention to data collection and preprocessing. Data scientists and engineers must ensure that the data used to train AI systems is representative and free from discriminatory patterns. This involves identifying and mitigating biases in data sources, employing techniques like data augmentation, and ensuring that data sets are diverse and inclusive.
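As one illustration of the preprocessing step described above, the sketch below applies reweighing, a technique that gives each training example a weight so that group membership and outcome become statistically independent in the weighted data. It is a minimal sketch, not a complete mitigation pipeline: the function names, the group labels "a" and "b", and the toy hiring data are all hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per example: P(group) * P(label) / P(group, label)."""
    if len(groups) != len(labels):
        raise ValueError("groups and labels must have the same length")
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Share of the total weight in `group` that belongs to positive examples."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(
        w for g, y, w in zip(groups, labels, weights) if g == group and y == 1
    )
    return positive / total


# Hypothetical historical hiring data (1 = hired).
# Group "a" was hired 3 times out of 4, group "b" only 1 time out of 4.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
print(weights)
print(rate_a, rate_b)
```

Under-represented combinations (here, hired members of group "b") receive weights above 1 and over-represented ones receive weights below 1, so the weighted hiring rate is the same for both groups. Reweighing only addresses the imbalance that is visible in the recorded labels; it does not correct for biased features or for groups missing from the data altogether.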

2.2 Lack of Explainability

The complexity of many AI models, especially deep learning algorithms, often makes it difficult to explain how decisions are made. This is particularly problematic in high-stakes applications where users and stakeholders need to understand the reasoning behind AI decisions. For instance, in healthcare, an AI system that determines the appropriate treatment for a patient must be able to provide clear explanations for its recommendations, as healthcare professionals and patients need to trust the system’s decisions.

Efforts to address this challenge include the development of explainable AI (XAI), which aims to make AI decision-making more interpretable. XAI techniques focus on creating models that are not only accurate but also transparent in their reasoning. These methods include simplifying models, generating explanations for predictions, and visualizing how certain inputs influence outputs. However, explainability can come at the cost of model accuracy, particularly when a complex model is replaced with a simpler one, and this can be a significant trade-off in some applications.

2.3 Regulatory and Legal Challenges

As AI technologies continue to evolve, governments and regulatory bodies face significant challenges in developing appropriate laws and regulations to ensure fairness and transparency. One of the key challenges is the fast-paced development of AI technologies, which often outpaces regulatory frameworks. Existing laws may not be adequate to address new issues arising from AI systems, such as the use of biased algorithms in hiring or policing.

Moreover, there is currently no global consensus on AI regulation, and different countries have taken varying approaches. The European Union, for example, adopted the Artificial Intelligence Act in 2024, which regulates AI applications according to their level of risk and places the strictest obligations on high-risk systems. In contrast, the United States has largely relied on self-regulation by tech companies, with some state-level initiatives addressing specific concerns like facial recognition and algorithmic bias.

Creating regulatory frameworks that can effectively address AI’s fairness and transparency challenges will require international cooperation, collaboration between governments, industry, and academia, and the development of flexible, adaptive laws that can keep pace with technological advancements.

3. Strategies for Ensuring Fairness and Transparency

To address the challenges of fairness and transparency in AI decision-making, both companies and governments must take proactive steps to ensure that AI systems are designed and deployed ethically. Several strategies can help achieve these goals.

3.1 Ethical AI Design and Development

The foundation for ensuring fairness and transparency in AI begins with ethical design principles. Companies must prioritize fairness and transparency when developing AI systems by adhering to ethical guidelines that emphasize non-discrimination, accountability, and transparency. Ethical AI design should include:

- Bias detection and mitigation: Regular audits of training data and models to identify and correct biases.

- Diverse teams: AI development teams should be diverse in terms of gender, race, and background to avoid unintentional biases in design and decision-making.

- Stakeholder involvement: Engaging diverse stakeholders, including marginalized communities, in the design and development process to ensure that AI systems address a wide range of perspectives.
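The bias audit mentioned in the list above can be made concrete by comparing a model's error rates across groups. The following is a minimal sketch, assuming binary labels and predictions and a single group attribute; the function names and the toy data are hypothetical, and a real audit would use far more data and more than one metric.

```python
from collections import defaultdict


def error_rates_by_group(groups, y_true, y_pred):
    """Return the true positive rate and false positive rate for each group."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for g, truth, pred in zip(groups, y_true, y_pred):
        if truth == 1 and pred == 1:
            key = "tp"
        elif truth == 0 and pred == 1:
            key = "fp"
        elif truth == 0 and pred == 0:
            key = "tn"
        else:
            key = "fn"
        counts[g][key] += 1

    rates = {}
    for g, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["fp"] + c["tn"]
        rates[g] = {
            # None marks a rate that cannot be computed for this group.
            "tpr": c["tp"] / positives if positives else None,
            "fpr": c["fp"] / negatives if negatives else None,
        }
    return rates


def largest_gap(rates, metric):
    """Difference between the highest and lowest value of `metric` across groups."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values) if values else None


# Hypothetical audit sample: true outcomes and model predictions for two groups.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

rates = error_rates_by_group(groups, y_true, y_pred)
tpr_gap = largest_gap(rates, "tpr")
fpr_gap = largest_gap(rates, "fpr")
print(rates)
print(tpr_gap, fpr_gap)
```

A large gap in false positive rates means one group is wrongly flagged more often than another, which is the kind of disparity raised in criticism of risk assessment tools. Which gap matters most, and how large a gap is acceptable, depends on the application and is a policy decision rather than something the code can settle.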

3.2 Explainable AI and Transparency Techniques

Explainability and transparency should be integral to the design of AI systems. To enhance transparency, companies can adopt explainable AI techniques that allow users to understand how AI systems make decisions. Some methods to increase transparency include:

- Feature importance: Providing explanations about which features of the input data were most influential in the decision-making process.

- Model simplification: Using simpler, more interpretable models where possible, even if it means sacrificing some predictive accuracy.

- Visualizations: Creating visual representations of how AI models make decisions to help users understand the process.
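Feature importance, the first method in the list above, can be estimated without looking inside the model at all. One common approach is permutation importance: shuffle the values of one feature and measure how much the model's accuracy drops. The sketch below uses only the Python standard library; the toy model, the feature meanings (income and shoe size), and the data are hypothetical stand-ins for a real trained model and dataset.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    correct = sum(1 for row, y in zip(rows, labels) if model(row) == y)
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_features, repeats=10, seed=0):
    """Average drop in accuracy when each feature's values are shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(repeats):
            column = [row[j] for row in rows]
            rng.shuffle(column)
            # Rebuild each row with the shuffled value in position j.
            permuted = [
                row[:j] + (value,) + row[j + 1:]
                for row, value in zip(rows, column)
            ]
            drops.append(baseline - accuracy(model, permuted, labels))
        importances.append(sum(drops) / repeats)
    return importances


def toy_model(row):
    """Hypothetical model: approves when feature 0 (income) is at least 50."""
    return 1 if row[0] >= 50 else 0


# Hypothetical rows of (income in thousands, shoe size).
rows = [(30, 7), (45, 9), (60, 8), (80, 10), (25, 11), (55, 6), (70, 9), (40, 8)]
labels = [toy_model(row) for row in rows]

importances = permutation_importance(toy_model, rows, labels, n_features=2)
print(importances)
```

Because the toy model ignores shoe size, shuffling that column never changes a prediction and its importance is zero, while shuffling income degrades accuracy. The same procedure works for any model that can be called on a row, which is why it is often used with otherwise opaque systems. It reports which inputs the model relies on, not whether that reliance is justified.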

3.3 Regulatory Frameworks and Policy Implementation

Governments must play a critical role in ensuring fairness and transparency in AI decision-making. A robust regulatory framework should:

- Establish clear guidelines for fairness: Governments can create regulations that require companies to demonstrate that their AI systems are free from discriminatory biases.

- Promote transparency: Regulations should mandate that AI systems provide clear, understandable explanations for their decisions, particularly in high-stakes applications such as healthcare, criminal justice, and finance.

- Encourage independent audits: Governments should establish independent oversight bodies to audit AI systems for fairness, transparency, and accountability.

3.4 Continuous Monitoring and Accountability

AI systems should not only be designed with fairness and transparency in mind but also be continuously monitored after deployment. Regular audits and updates are necessary to ensure that AI systems remain fair and transparent over time. Additionally, accountability mechanisms should be established to hold companies and developers responsible for any unfair or biased decisions made by their AI systems.
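A simple form of post-deployment monitoring is to recompute a fairness metric over each new batch of decisions and raise an alert when it crosses a threshold. The sketch below tracks the ratio between the lowest and highest group selection rates per time window. The window names, the data, and the 0.8 threshold are assumptions for illustration; the threshold echoes the commonly cited four-fifths rule of thumb and should be set to suit the application.

```python
from collections import Counter


def check_window(decisions, min_ratio=0.8):
    """Summarize one batch of (group, outcome) decisions, where outcome is 0 or 1."""
    totals = Counter()
    positives = Counter()
    for group, outcome in decisions:
        totals[group] += 1
        positives[group] += outcome
    rates = {g: positives[g] / totals[g] for g in totals}
    highest = max(rates.values())
    # If no group received a positive outcome, there is no disparity to report.
    ratio = min(rates.values()) / highest if highest > 0 else 1.0
    return {"rates": rates, "ratio": ratio, "alert": ratio < min_ratio}


def monitor(windows, min_ratio=0.8):
    """Return the names of the windows whose selection-rate ratio falls below the threshold."""
    return [
        name
        for name, decisions in windows
        if check_window(decisions, min_ratio)["alert"]
    ]


# Hypothetical decision logs for two consecutive weeks.
windows = [
    ("week-1", [("a", 1), ("a", 1), ("a", 0), ("a", 0),
                ("b", 1), ("b", 1), ("b", 0), ("b", 0)]),
    ("week-2", [("a", 1), ("a", 1), ("a", 1), ("a", 0),
                ("b", 1), ("b", 0), ("b", 0), ("b", 0)]),
]

flagged = monitor(windows)
week_2 = check_window(windows[1][1])
print(flagged)
print(week_2)
```

In this toy log the first week is balanced and the second is not, so only the second is flagged. An alert of this kind is a prompt for human review rather than proof of unfair treatment, since a shift in the applicant pool can also move the ratio.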

4. Global Efforts to Ensure Fairness and Transparency in AI

Efforts to ensure fairness and transparency in AI decision-making are not limited to individual companies or governments. They also extend to international initiatives aimed at creating global standards and guidelines.

4.1 The European Union’s Artificial Intelligence Act

The Artificial Intelligence Act, adopted by the European Union in 2024, is one of the most comprehensive regulatory frameworks aimed at ensuring AI’s ethical use. The Act establishes rules for high-risk AI systems, requiring them to meet strict requirements for transparency, accountability, and fairness. The Act also includes provisions for regular audits and human oversight of AI systems, aiming to minimize the risks associated with AI decision-making.

4.2 The OECD Principles on Artificial Intelligence

The Organisation for Economic Co-operation and Development (OECD) has also developed a set of AI principles, which emphasize fairness, transparency, and accountability in AI systems. These principles provide guidance to governments and businesses on how to develop AI systems that align with ethical standards and promote the well-being of society.

4.3 The United Nations and Global AI Ethics

The United Nations has established initiatives to promote global cooperation on AI ethics, with a focus on ensuring that AI technologies are developed in a manner that respects human rights and promotes social good. The UNESCO Recommendation on the Ethics of Artificial Intelligence is one such initiative, which aims to provide ethical guidelines for AI research, development, and deployment across nations.

5. Conclusion

Ensuring fairness and transparency in AI decision-making is crucial for fostering trust in these technologies and preventing the perpetuation of bias and discrimination. Both companies and governments must work together to develop ethical AI systems that prioritize fairness, transparency, and accountability. With proper regulatory frameworks, ethical design practices, and continuous monitoring, AI can be harnessed in ways that benefit society as a whole, while minimizing its potential risks and harms.